AI Use Policy

Adaptimist Insights uses AI in a practical, bounded way.

The aim is not to automate human understanding away. The aim is to make certain kinds of exploration, explanation, drafting, and routing more accessible without pretending that a model knows more about a person or situation than it really does.

Where AI may be used

AI may be used in parts of the site such as:

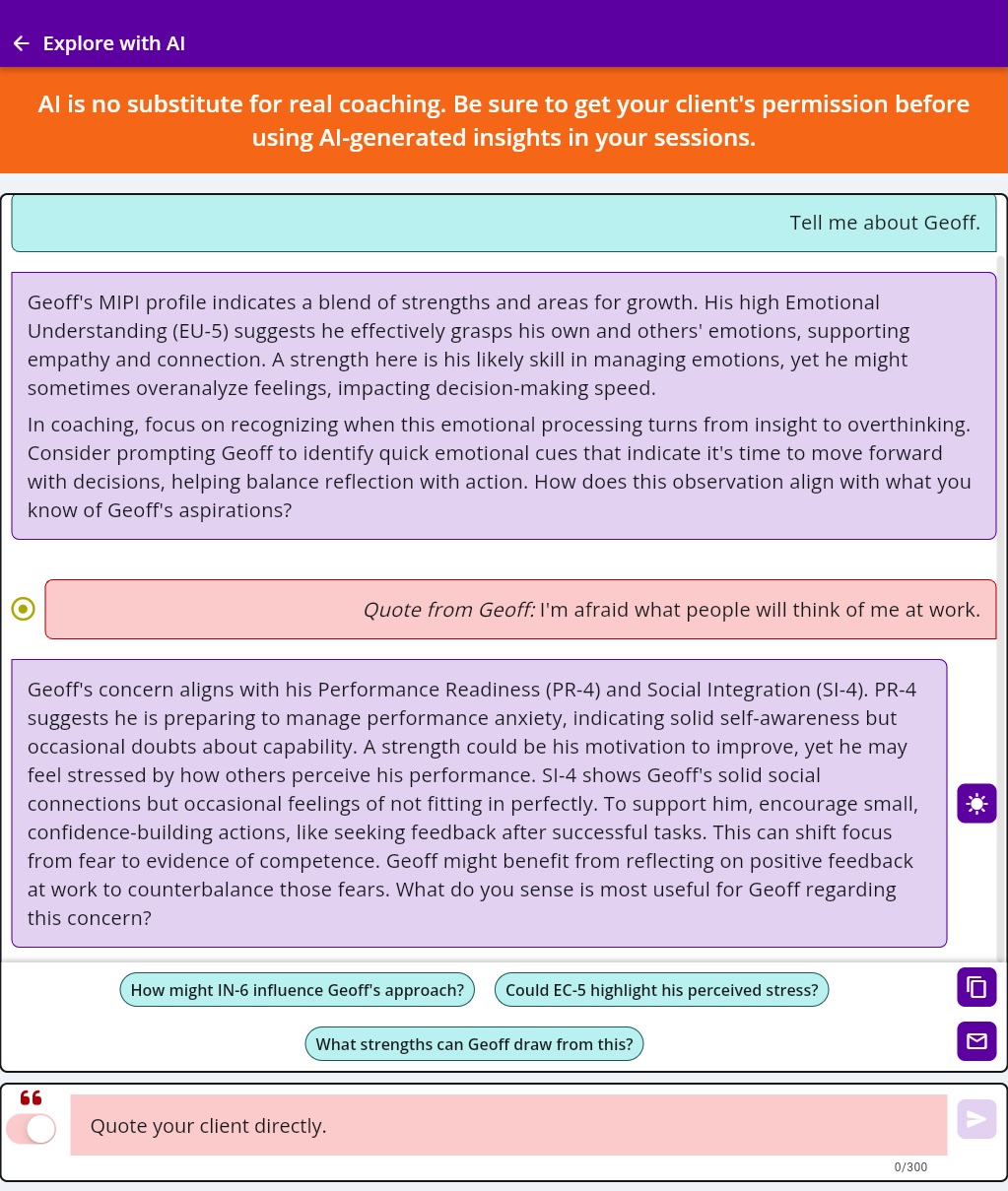

- conversational explainers

- guided assistants

- drafting tools

- communication rehearsal tools

- problem-framing or problem-first pathways

- internal routing or workflow systems that help deliver these experiences

Some AI experiences are page-aware, meaning the system may receive structured context about the page you are on so it can respond in a more relevant way.
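As an illustration only, a page-aware request might look something like the sketch below. The field names (`page_path`, `page_title`, `topic`) and the `build_request` helper are hypothetical and chosen for this example; they do not describe the site's actual implementation. The point is that the context is a small, fixed set of fields about the page, not an open-ended description of the user.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class PageContext:
    """Hypothetical structured context describing the page a user is on."""
    page_path: str
    page_title: str
    topic: str


def build_request(user_message: str, context: PageContext) -> dict:
    """Combine the user's message with page context in one structured payload."""
    return {
        "message": user_message.strip(),  # trim stray whitespace only
        "context": asdict(context),       # only the declared fields are sent
    }


request = build_request(
    "  What does this page mean by boundary language? ",
    PageContext(
        page_path="/ai-use-policy",
        page_title="AI Use Policy",
        topic="policy",
    ),
)
```

Because the context type is fixed, anything not declared on it cannot be attached to a request by accident.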

What AI is for here

On this site, AI is used to help with things like:

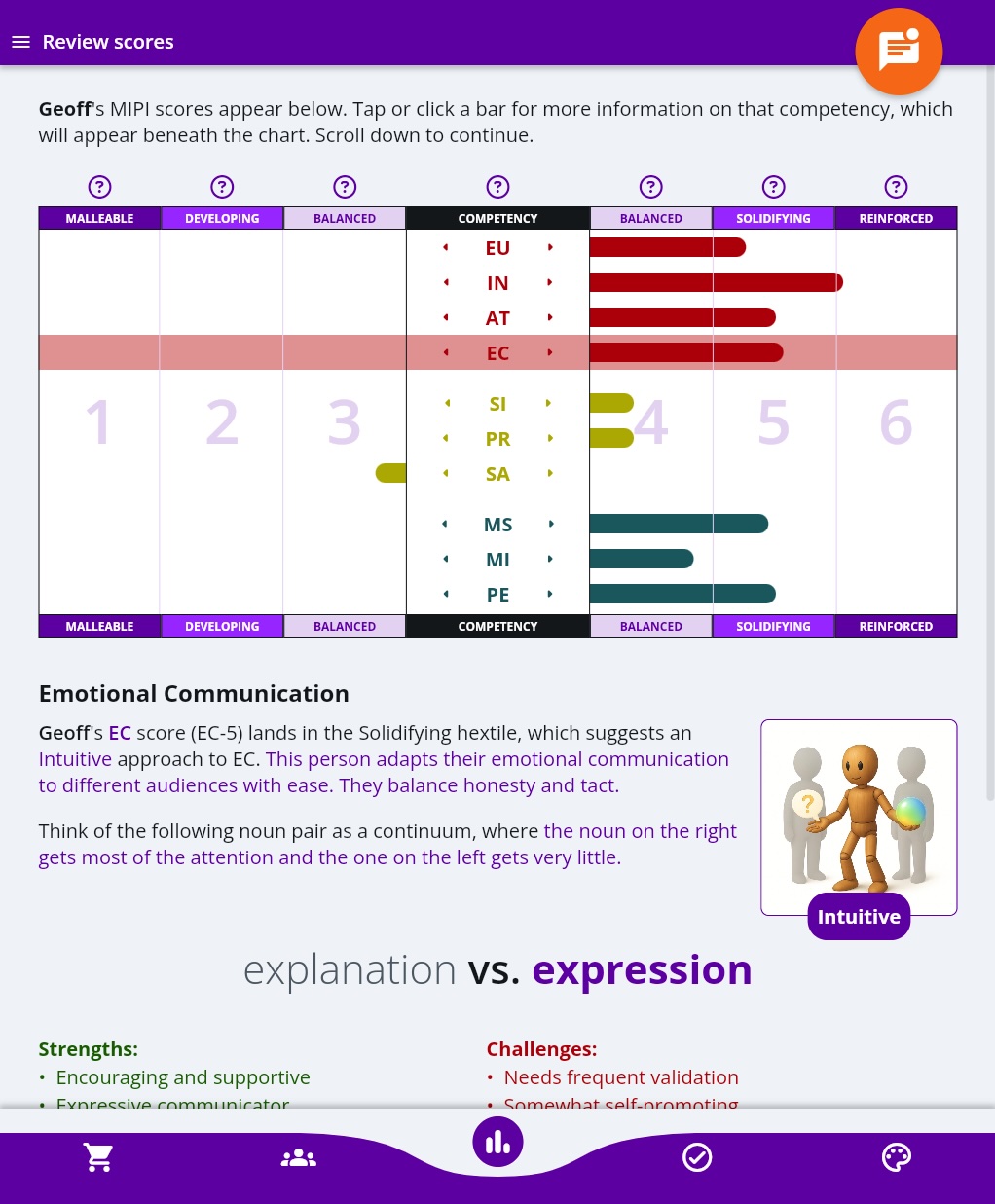

- explaining concepts in more accessible language

- helping users think through a situation

- generating draft wording for reflection or communication

- supporting structured learning experiences

- connecting users to the next useful doorway in the ecosystem

In other words, AI is used as a tool for clarification and interaction, not as an engine of authority.

What AI is not for here

AI on this site is not presented as:

- a diagnosis system

- a mental health professional

- a legal advisor

- a crisis counselor

- a truth machine

- a substitute for professional judgment

- a guarantee about what another person will do

If a situation is high-stakes, urgent, abusive, safety-critical, or legally sensitive, AI output should not be treated as enough on its own.

Design principles

The corpus behind this site points to a few non-negotiable principles, and those principles shape how AI is used:

- do not imply hidden truths about the user

- do not overclaim certainty

- do not disguise exploration as diagnosis

- use boundary language where relevant

- support reflection without pretending to replace human support

- privilege useful structure over theatrical intelligence

These principles matter more than whether an output sounds fluent.

Limits of AI output

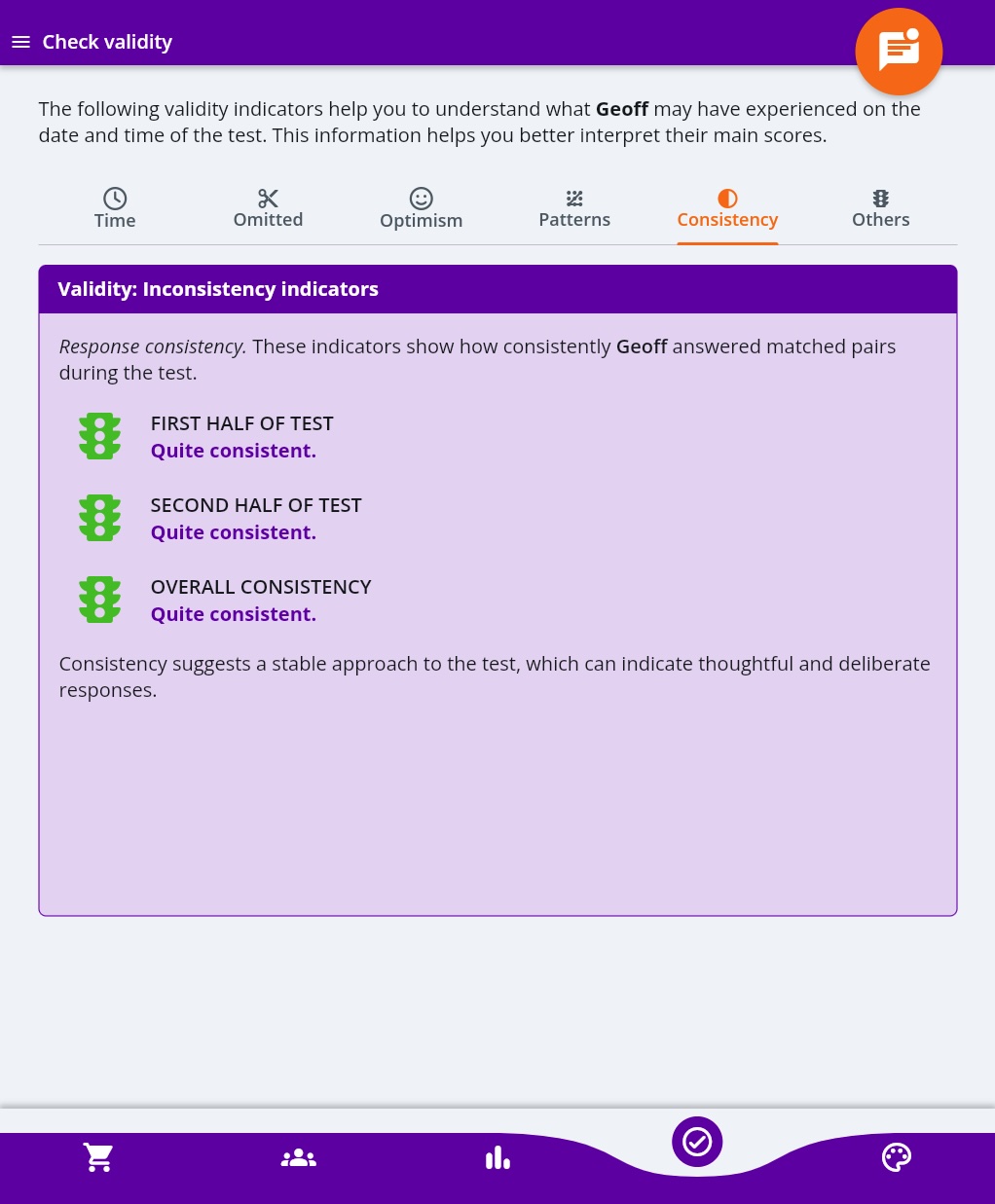

AI-generated output can be helpful and still be wrong.

Responses may:

- oversimplify a situation

- miss important context

- reflect bias or pattern error

- produce language that sounds more certain than it should

- fail to recognize when a human conversation or professional support is the better next step

For that reason, AI outputs on this site should be treated as prompts for reflection, drafting, learning, or next-step thinking, not as final answers.

Data handling in AI features

When you use an AI-assisted feature, the information you submit may be sent through the technical systems used to generate the response. Depending on the feature, this may include website infrastructure, workflow automation, AI platforms, or logging systems used to operate and secure the experience.

We aim to keep AI requests structured and proportionate to the purpose of the feature. We also use technical controls such as validation, throttling, and security checks to reduce misuse and protect the system.
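To make "validation" and "throttling" concrete, here is a minimal sketch of what such controls can look like in general. It is an assumption-laden example, not a description of the controls this site runs: the limits (`MAX_MESSAGE_CHARS`, `MAX_REQUESTS`, `WINDOW_SECONDS`) and the names `validate_message` and `SlidingWindowThrottle` are invented for illustration.

```python
import time
from collections import defaultdict, deque
from typing import Optional

# Assumed limits, chosen only for this example.
MAX_MESSAGE_CHARS = 2000
MAX_REQUESTS = 5
WINDOW_SECONDS = 60.0


def validate_message(message: str) -> str:
    """Reject empty or oversized input before it reaches any AI system."""
    cleaned = message.strip()
    if not cleaned:
        raise ValueError("message is empty")
    if len(cleaned) > MAX_MESSAGE_CHARS:
        raise ValueError("message is too long")
    return cleaned


class SlidingWindowThrottle:
    """Allow at most `max_requests` per client within a sliding time window."""

    def __init__(self, max_requests: int = MAX_REQUESTS,
                 window_seconds: float = WINDOW_SECONDS) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._history = defaultdict(deque)  # client id -> request timestamps

    def allow(self, client_id: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        history = self._history[client_id]
        # Forget requests that have aged out of the window.
        while history and now - history[0] >= self.window_seconds:
            history.popleft()
        if len(history) >= self.max_requests:
            return False
        history.append(now)
        return True
```

A request would pass through `validate_message` first and would only be forwarded if `allow` returns `True` for that client. Both checks run before any AI platform is involved, which is how they reduce misuse and keep requests proportionate.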

For more detail about information handling, see the site Privacy Policy.

Human judgment still matters

The most important point is simple: AI can help surface options, structure, and language, but it does not remove the need for judgment.

That is especially true in situations involving:

- safety

- abuse or coercion

- crisis

- law or policy

- employment consequences

- clinical questions

- culturally sensitive or deeply relational decisions

In those situations, human context is not extra. It is central.

How we want users to approach AI here

The best use of these tools is usually:

- as a first pass, not a final verdict

- as a rehearsal space, not a substitute for reality

- as a way to get clearer, not a way to avoid thinking

- as support for reflection, not surrender of agency

Changes to this policy

As AI features evolve, this policy may be updated to reflect new tools, workflows, or safeguards.

Plain-language summary

Adaptimist uses AI to help people explore, draft, understand, and navigate. It is used as a bounded tool, not as a source of hidden authority. If the stakes are high, the limits matter more than the fluency.